Join MetricStream and DuploCloud for a data-backed session exploring how agentic AI is reshaping DevOps practices to make teams faster, more compliant, and more resilient.

It’s 2026, and DevOps teams are doing way more than writing scripts and reacting to tickets. They’re now building and managing AI agents that work alongside their engineers. With those agents in place, teams can continuously monitor systems and resolve issues quickly.

In a recent webinar session, Venkat Thiruvengadam, CEO and Founder of DuploCloud, and Vallinayagam Nallaperumal, Vice President of Strategic Technology and Cloud Operations at MetricStream, shared how agentic AI is reshaping the world of DevOps.

They’re seeing teams get more proactive, faster at resolving issues, and better governed.

Here, we take a peek into their conversation about what DevOps actually looks like with AI agents in 2026.

How AI Is Changing the DevOps Model

To really understand where DevOps is heading, it helps to look at where it’s been.

Traditionally, DevOps worked like this: your engineering team would go into an environment with a set of requirements and a request. Maybe they needed an application, a SQL database, or a third-party software integration.

The DevOps team would then interpret the task, convert it to scripts, and deploy it.

Then the team would test and iterate.

Of course, requirements rarely stay fixed. They evolve. So your team grows. And your system becomes more complex, and your compliance rules get tighter.

In short, your DevOps team ends up spending a ton of time in reactive mode: modifying scripts, reworking deployments, and managing all the moving parts.

In 2026, that reactive mode is shifting.

Now, instead of reacting to specific requirements, DevOps teams build an AI engineer. Engineering teams can ask DevOps for something, and DevOps can hand that request to an LLM-powered AI agent.

The AI agent can:

- Ask clarifying questions (like which environment you mean)

- Research best practices dynamically from the internet and documentation

- Draft infrastructure changes as recommended actions

- Propose commands and request approval before running them

- Document its findings (in a report with recommended actions)
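
The "propose commands and request approval" step above can be sketched roughly as follows. This is a minimal illustration, not how any particular product implements it: the names `ProposedCommand`, `AgentReport`, and `run_with_approval` are hypothetical, and the `approve` and `execute` callbacks stand in for whatever human review and execution mechanism a team actually uses.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class ProposedCommand:
    """A command the agent wants to run, plus its stated reason (hypothetical type)."""
    command: str
    reason: str


@dataclass
class AgentReport:
    """Record of which proposed commands were executed and which were skipped."""
    executed: List[str] = field(default_factory=list)
    skipped: List[str] = field(default_factory=list)


def run_with_approval(
    proposals: List[ProposedCommand],
    approve: Callable[[ProposedCommand], bool],
    execute: Callable[[str], None],
) -> AgentReport:
    """Run each proposal only if the approver says yes; record the outcome either way."""
    report = AgentReport()
    for proposal in proposals:
        if approve(proposal):
            execute(proposal.command)
            report.executed.append(proposal.command)
        else:
            report.skipped.append(proposal.command)
    return report


if __name__ == "__main__":
    ran: List[str] = []  # stand-in executor: just records what would have run
    report = run_with_approval(
        [
            ProposedCommand("kubectl get pods -n staging", "inspect current state"),
            ProposedCommand("kubectl delete pod web-1 -n staging", "restart a stuck pod"),
        ],
        approve=lambda p: "delete" not in p.command,  # assumed policy: reject deletes
        execute=ran.append,
    )
    print(report)
```

In a real setup the `approve` callback would typically be a person clicking a button in chat or a ticketing tool rather than a lambda; the point of the sketch is only that nothing runs without passing through that gate.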

So we’ve moved DevOps teams from writing scripts and constantly going back and forth to building the AI engineers that can do all that work for them, with access to all the knowledge on the internet.

Now, your DevOps team is designing and supervising highly intelligent systems that execute tasks for them.

They were scriptwriters.

Now they’re orchestrators of AI talent.

Reaction Is a Thing of the Past

As AI transforms development workflows, it reshapes operations even more dramatically.

Traditionally:

- Operations teams respond to alerts

- Something breaks

- A ticket is filed

- Engineers investigate

- Remediation begins

It’s a step-by-step process you’re likely used to.

AI agents introduce a continuous model.

Instead of waiting for problems, agents monitor infrastructure in real time. Now, they can break down information silos across monitoring tools, logs, ticketing systems, and cost platforms.

They’ll also identify issues and flag configuration drift.
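
To make "configuration drift" concrete, here is a minimal sketch of what flagging drift can look like: compare the configuration you declared against what is actually running and report every mismatch. The `find_drift` helper, the `MISSING` marker, and the example settings are all assumptions for illustration; real agents would pull both sides from infrastructure APIs.

```python
from typing import Any, Dict, Tuple

MISSING = "<missing>"  # marker for a key present on only one side


def find_drift(
    desired: Dict[str, Any], actual: Dict[str, Any]
) -> Dict[str, Tuple[Any, Any]]:
    """Return {key: (desired_value, actual_value)} for every setting that differs."""
    drift: Dict[str, Tuple[Any, Any]] = {}
    for key in sorted(desired.keys() | actual.keys()):
        want = desired.get(key, MISSING)
        have = actual.get(key, MISSING)
        if want != have:
            drift[key] = (want, have)
    return drift


if __name__ == "__main__":
    desired = {"replicas": 3, "image": "web:1.4", "tls": True}
    actual = {"replicas": 5, "image": "web:1.4", "debug": True}
    for key, (want, have) in find_drift(desired, actual).items():
        print(f"{key}: desired={want!r} actual={have!r}")
```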

Our favorite bonus is that they’re even starting to predict potential failures and mitigate them before they occur.

Here’s where AI-powered Site Reliability Engineering (AI SRE) comes into play. AI SRE systems are largely focused on troubleshooting operations.

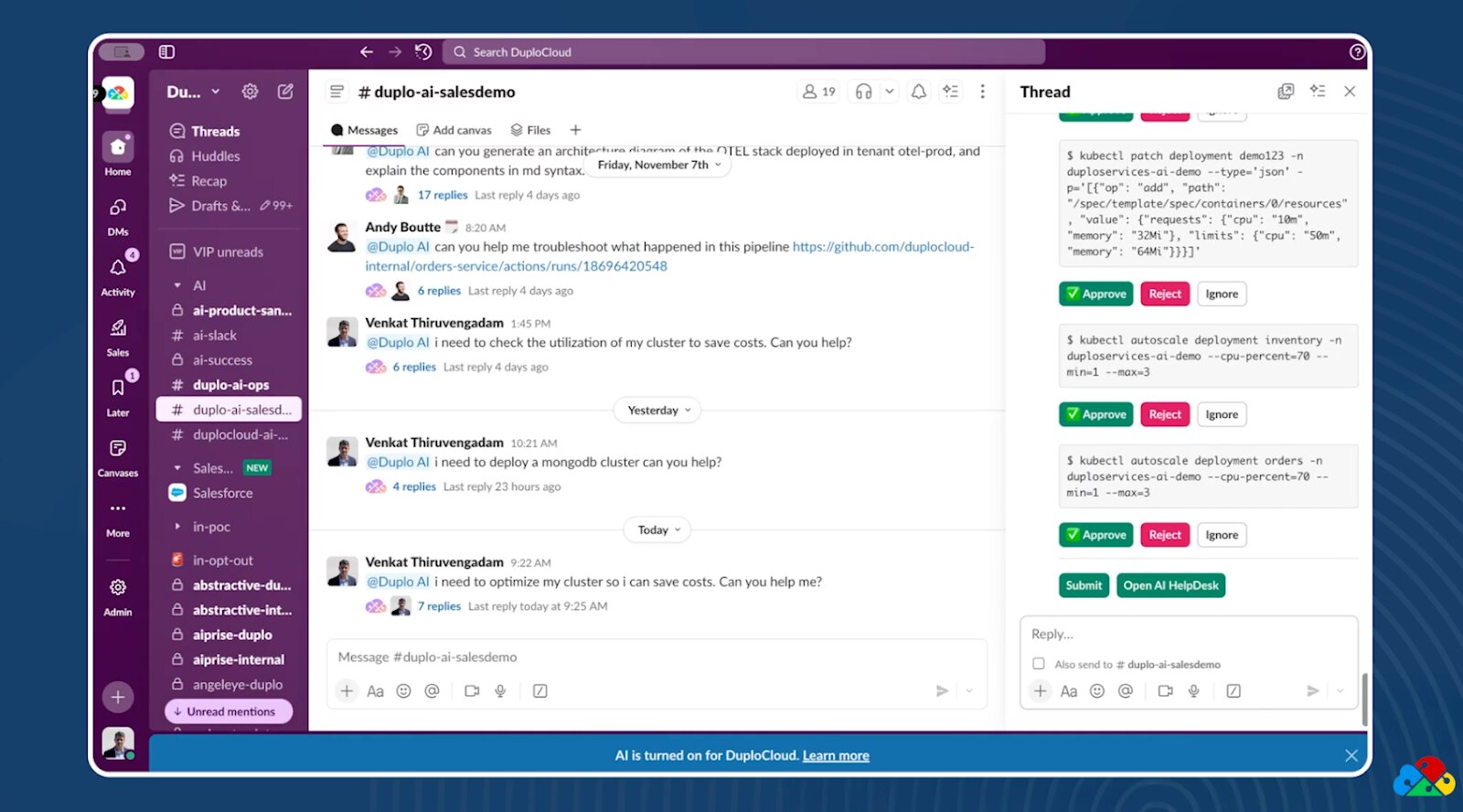

In some cases, they can help remediate, as long as they have proper approvals and guardrails, of course.

The difference?

Speed.

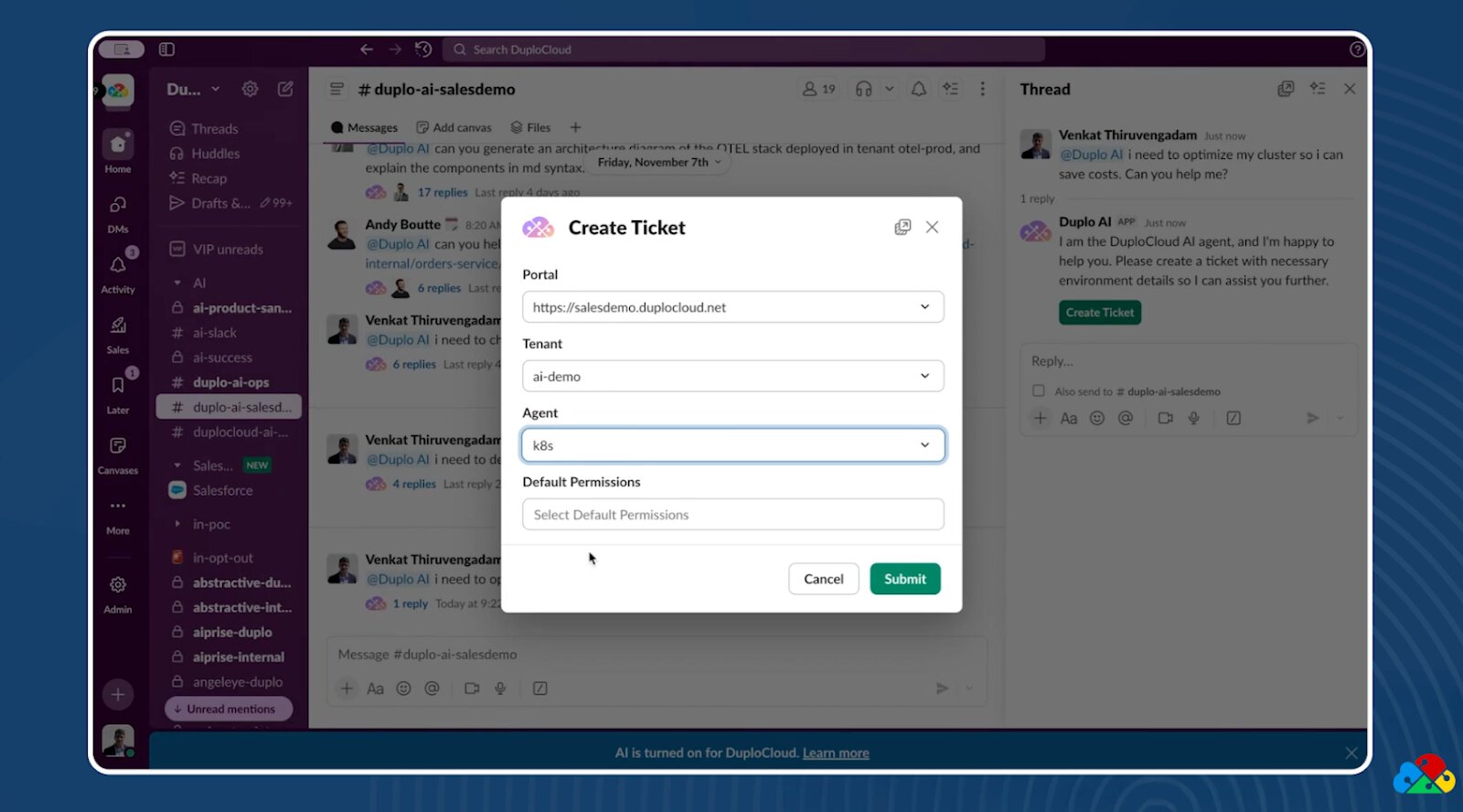

Consider a cost optimization question posted in Slack. A year ago, it might have sat for half a day or longer before someone reviewed it.

Today, an AI agent can pick up the request immediately, confirm the environment and agent context, validate permissions, ask approval for commands, analyze infrastructure data, and generate a detailed report.

And it takes just minutes.

Working from Experience Rather Than Scratch

Another advantage of AI agents is that they can accumulate knowledge.

Where human engineers rely on their own experience, AI agents can rely on past issues and past solutions.

When connected to internal documentation, historical tickets, and the internet, AI agents can reference how similar problems were solved in the past. They don’t start from scratch. They pattern-match, see similarities, and suggest solutions based on a wealth of history.

In many cases, engineers don’t even need to write custom code to address common issues. With access to engineering, development, and operations data, AI agents can find the most likely solution quickly.

This helps cut mean time to resolution (MTTR) and lets DevOps teams focus on higher-order challenges.
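
For readers who want to track this, MTTR is simply the average time between an incident being opened and being resolved. A small sketch, assuming incidents are available as (opened, resolved) timestamp pairs; the function name and sample data are illustrative only:

```python
from datetime import datetime, timedelta
from typing import List, Tuple


def mean_time_to_resolution(
    incidents: List[Tuple[datetime, datetime]]
) -> timedelta:
    """Average of (resolved - opened) across all incidents."""
    if not incidents:
        raise ValueError("need at least one incident to compute MTTR")
    total = sum(
        (resolved - opened for opened, resolved in incidents),
        timedelta(),  # start value so sum() adds timedeltas, not ints
    )
    return total / len(incidents)


if __name__ == "__main__":
    incidents = [
        (datetime(2026, 1, 5, 9, 0), datetime(2026, 1, 5, 9, 30)),    # 30 minutes
        (datetime(2026, 1, 6, 14, 0), datetime(2026, 1, 6, 15, 30)),  # 90 minutes
    ]
    print(mean_time_to_resolution(incidents))  # 1:00:00
```

Comparing this number before and after introducing agents gives a simple view of whether resolution is actually getting faster.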

The days of repetitive troubleshooting are starting to fade.

Let’s Talk About the Middle Layer

As enterprises adopt AI agents, a critical question comes up over and over: how much should we build internally, and how much should we rely on vendors?

The answer, of course, lies somewhere in the middle.

Large language models are powerful knowledge engines. But they can’t safely execute changes inside your infrastructure on their own. An LLM can suggest a deployment command, but it can’t validate credentials, enforce permissions, or ensure guardrails are met.

That’s where a middle orchestration layer becomes essential.

This layer verifies identity, checks access controls, defines allowed endpoints, and ensures guardrails are applied before actions are taken.
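
As a rough illustration of those checks (identity, access control, allowed endpoints), the sketch below gates every agent request before anything is executed. It is a simplified model under stated assumptions, not DuploCloud’s implementation: `ActionRequest`, `Guardrails`, and the example agent, action, and endpoint names are all hypothetical.

```python
from dataclasses import dataclass
from typing import Dict, Set, Tuple


@dataclass(frozen=True)
class ActionRequest:
    """What an agent is asking to do (hypothetical shape)."""
    agent_id: str
    action: str
    endpoint: str


class Guardrails:
    """Checks identity, per-agent permissions, and an endpoint allow-list."""

    def __init__(
        self,
        known_agents: Set[str],
        permissions: Dict[str, Set[str]],
        allowed_endpoints: Set[str],
    ) -> None:
        self.known_agents = known_agents
        self.permissions = permissions
        self.allowed_endpoints = allowed_endpoints

    def check(self, request: ActionRequest) -> Tuple[bool, str]:
        """Return (allowed, reason); the first failing check wins."""
        if request.agent_id not in self.known_agents:
            return False, "unknown agent"
        if request.action not in self.permissions.get(request.agent_id, set()):
            return False, "action not permitted"
        if request.endpoint not in self.allowed_endpoints:
            return False, "endpoint not allowed"
        return True, "ok"


if __name__ == "__main__":
    guardrails = Guardrails(
        known_agents={"cost-agent"},
        permissions={"cost-agent": {"read_billing"}},
        allowed_endpoints={"billing-api.internal"},
    )
    print(guardrails.check(ActionRequest("cost-agent", "read_billing", "billing-api.internal")))
    print(guardrails.check(ActionRequest("cost-agent", "delete_cluster", "billing-api.internal")))
```

The design choice worth noting is that the LLM never calls infrastructure directly; it only produces requests, and the layer decides whether each one is allowed to proceed.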

Vendors like DuploCloud specialize in building this orchestration framework. We are integrating AI recommendations with tools like Kubernetes or AWS, all while enforcing permissions and guardrails.

Enterprises, meanwhile, can build their own AI agents tailored to their specific internal workflows and domain-specific needs.

In practice, the most effective strategy will blend both approaches.

Pair vendor-supported orchestration with enterprise-specific agent development, and you get the best of both worlds.

Managing AI As a Kind of HR

When we talk about AI, we inevitably have to talk about governance.

AI is powerful, and, yes, it can speed up your workflows.

But it can also make big, big mistakes.

Organizations have to be concerned about:

- Rising AI usage costs

- Errors spreading rapidly across environments

- Multiple agents acting without coordination

- Hallucinations that can influence automated decisions

The first 80 to 90% of deployment tasks may be straightforward. The final 10 to 20%, those production-grade integration nuances and edge cases, is where risk can ruin everything.

As a result, companies are implementing oversight models that look a lot like human resource management. AI agents are assigned scopes, and they operate within defined permissions.

And you’ll have teams monitoring the agents’ performance and reviewing their outputs.

AI is treated effectively as a member of the team; it requires supervision just like anyone else.

But… How Can You Measure ROI?

With significant investment flowing into AI, executives understandably want measurable returns.

At DuploCloud, we frame ROI in operational efficiency: reduce labor overhead, minimize tool sprawl, and accelerate DevOps outcomes. And do it all without scaling headcount.

At MetricStream, ROI is outcome-based: we look at lower MTTR, stronger SLA adherence, improved issue detection, and tighter control over configuration drift.

But ultimately, success goes far beyond technical metrics.

Are customers happier? Are SLAs improving? Is revenue growing?

AI in DevOps has to come down to tangible business improvement… every time.

Where Do the Humans Go?

At both DuploCloud and MetricStream, we’re constantly talking about what AI means for our human staff.

The conversation always comes down to whether or not humans will lose their jobs to AI.

But history has shown us that technology leads to an evolution rather than an elimination.

Remember, there was a time when Java wasn’t even a language. Today, it’s foundational.

Similarly, AI literacy is becoming a core competency.

All this to say that DevOps roles aren’t disappearing. They’re shifting:

- From writing repetitive scripts to designing intelligent systems

- From reacting to incidents to shaping proactive architectures

- From executing tasks to governing AI-driven workflows

The key today is to remain adaptable and open.

The 2026 Structural Shift of DevOps

In 2026, DevOps looks different. It just does.

AI agents are now able to continuously monitor environments, and engineers can interact conversationally with their infrastructure.

Those agents can analyze tickets in mere minutes, and remediation is automated… but governed.

We apply guardrails, and we contain drift before it spreads.

DevOps teams are now defined by their ability to orchestrate intelligence.

The shift, as we see it, is a structural one.

And the organizations that embrace AI agents, paired with strong human oversight, are the ones building DevOps functions that are faster, more proactive, and better governed.

Watch the full webinar here.